Building Self-Improving Skills for Agents

"Not just agents with skills, but agents with skills that can improve over time."

If you've been following the agent space lately, you've probably noticed that SKILL.md files are becoming a de facto standard. The idea is simple: give an agent a folder of markdown files, each containing instructions for a specific task (e.g., summarization, bug triage, code review), and let the agent call on them as needed.

It works brilliantly for demos.

But here's the uncomfortable truth we're all starting to face: skills are usually static, while the environment around them is not.

The Problem: Skills Are Static, While The Environment Around Them Is Not

A skill that worked perfectly a few weeks ago can quietly start failing when:

- The codebase changes

- The underlying model behaves differently

- The kinds of tasks users ask for shift over time

- A tool's API updates or breaks

In most current systems, those failures are invisible. No one knows anything is wrong until outputs start degrading, or until things fail completely. And when something does break, good luck figuring out whether the issue was routing, the instructions themselves, or a tool call that no longer works.

This is the wall we keep hitting. And it's why the "skills folder" approach, while promising, is not a silver bullet.

The missing piece?

Treating skills as living system components, not fixed prompt files.

The Vision: Skills That Evolve

This is exactly the idea behind cognee-skills: not just storing skills better or routing them more intelligently, but building a system where skills can actually evolve when they fail or underperform.

Until now, the skills workflow has looked like this:

- Write a prompt

- Save it in a folder

- Call it whenever needed

Simple. Effective. But after a certain scale, you start hitting the same problems:

- One skill gets selected too often

- Another looks good on paper but fails in practice

- A specific instruction keeps failing

- A tool call breaks because the environment changed

Worst of all, no one knows whether the issue is the routing, the instructions, or the tool call itself, so every failure means manual inspection and maintenance.

What we've built is a closed loop that turns skills from static artifacts into evolving components. Let me walk you through how it works.

Under the Hood: The Self-Improvement Loop

1. Skill Ingestion: Structure Before Intelligence

Right now, your skills folder is probably a flat set of markdown files, one per task.

It's simple, but it's also shallow. With cognee, we can give everything a clearer structure, not just because it looks nicer, but because it also makes searching much more effective. We enrich each skill with:

- Semantic meaning

- Task patterns

- Summaries

- Relationships between skills

All of this gets stored using cognee's Custom DataPoint, turning a flat folder into a navigable knowledge graph. The system doesn't just see files anymore; it understands what skills are for, how they relate, and when they should be used.
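To make the ingestion step concrete, here is a minimal sketch of turning raw SKILL.md text into structured, linked records. It is not the cognee-skills implementation: `SkillRecord` is a hypothetical plain dataclass standing in for a custom DataPoint, the `- when:` line convention is an assumption made for illustration, and the word-overlap check is a crude stand-in for real semantic enrichment.

```python
from dataclasses import dataclass, field
from pathlib import Path


@dataclass
class SkillRecord:
    """Hypothetical stand-in for a custom DataPoint describing one skill."""
    name: str
    instructions: str
    summary: str
    task_patterns: list = field(default_factory=list)
    related: list = field(default_factory=list)  # names of related skills


def parse_skill(name: str, text: str) -> SkillRecord:
    """Build a record from raw SKILL.md text.

    Assumed convention (not from the original post): the first non-empty
    line is the summary, and lines starting with "- when:" list task patterns.
    """
    lines = [ln.strip() for ln in text.splitlines() if ln.strip()]
    summary = lines[0].lstrip("# ").strip() if lines else ""
    prefix = "- when:"
    patterns = [ln[len(prefix):].strip() for ln in lines if ln.startswith(prefix)]
    return SkillRecord(name=name, instructions=text, summary=summary,
                       task_patterns=patterns)


def load_skills(root: Path) -> list:
    """Read every <root>/<skill-name>/SKILL.md file into a SkillRecord."""
    return [parse_skill(p.parent.name, p.read_text(encoding="utf-8"))
            for p in sorted(root.glob("*/SKILL.md"))]


def link_related(skills: list) -> None:
    """Link skills whose task patterns share at least one word."""
    def words(skill: SkillRecord) -> set:
        return {w.lower() for p in skill.task_patterns for w in p.split()}

    for a in skills:
        a.related = sorted(b.name for b in skills
                           if b is not a and words(a) & words(b))
```

In a real pipeline the summary, task patterns, and relationships would more likely come from a model or an embedding search than from string matching; the point of the sketch is only the shape of the data once a flat folder becomes a set of connected records.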

2. Observation: Memory Is the Foundation of Learning

A skill cannot improve if the system has no memory of what happened when it ran. That's why every skill execution stores:

- What task was attempted

- Which skill was selected

- Whether it succeeded

- What error occurred (if any)

- User feedback (when available)

With observation, failure becomes something the system can reason about. Each run creates a node in the graph, connected to the skill that produced it, the task that triggered it, and any errors that resulted.
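As a rough illustration of what "each run creates a node in the graph" could look like, the sketch below records runs in a tiny in-memory graph. `RunRecord`, `RunGraph`, and the relation names (`used_skill`, `triggered_by`, `raised`) are all hypothetical names chosen for this example; a real setup would persist these nodes and edges in a graph store rather than in Python lists.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass
class RunRecord:
    """One skill execution, with the fields listed above."""
    run_id: int
    task: str
    skill: str
    succeeded: bool
    error: Optional[str] = None
    feedback: Optional[str] = None


class RunGraph:
    """Tiny in-memory graph: nodes keyed by id, edges as (source, relation, target)."""

    def __init__(self) -> None:
        self.nodes = {}
        self.edges = []

    def observe(self, run: RunRecord) -> str:
        """Store a run and connect it to its skill, its task, and any error."""
        run_node = f"run:{run.run_id}"
        self.nodes[run_node] = run
        self.nodes.setdefault(f"skill:{run.skill}", run.skill)
        self.nodes.setdefault(f"task:{run.task}", run.task)
        self.edges.append((run_node, "used_skill", f"skill:{run.skill}"))
        self.edges.append((run_node, "triggered_by", f"task:{run.task}"))
        if run.error is not None:
            self.nodes.setdefault(f"error:{run.error}", run.error)
            self.edges.append((run_node, "raised", f"error:{run.error}"))
        return run_node

    def runs_for(self, skill: str) -> list:
        """Return every recorded run that used the given skill."""
        target = f"skill:{skill}"
        return [self.nodes[src] for src, rel, dst in self.edges
                if rel == "used_skill" and dst == target]
```

Because errors are shared nodes rather than free-text fields on each run, two runs that hit the same error end up connected through it, which is what makes the later inspection step a graph traversal instead of a log search.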

3. Inspection: Finding the Signal in the Noise

Once enough failed runs accumulate, or even after a single critical failure, the system can inspect the connected history around that skill: past runs, feedback, tool failures, related task patterns.

Because everything is stored as a graph, the system can trace the recurring patterns behind bad outcomes.

This isn't guesswork. It's evidence-based debugging.
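A simplified version of that inspection can be sketched as an aggregation over run history. The run dictionary shape, the `critical` flag, and the `min_failures` threshold below are assumptions made for this example, not part of cognee-skills; the trigger rule mirrors the one described above (enough failed runs, or a single critical failure).

```python
from collections import Counter


def inspect_skill(runs, skill, min_failures=3):
    """Summarize the failure history of one skill.

    Each run is assumed to be a dict like:
    {"skill": str, "succeeded": bool, "error": str or None, "critical": bool}
    """
    history = [r for r in runs if r["skill"] == skill]
    failures = [r for r in history if not r["succeeded"]]
    # Group failures by error so recurring causes stand out.
    by_error = Counter(r.get("error") or "unknown" for r in failures)
    has_critical = any(r.get("critical", False) for r in failures)
    return {
        "skill": skill,
        "runs": len(history),
        "failure_rate": len(failures) / len(history) if history else 0.0,
        "top_errors": by_error.most_common(3),
        # Flag the skill after enough failures, or after one critical failure.
        "needs_amendment": has_critical or len(failures) >= min_failures,
    }
```

The report is deliberately small: a failure rate, the most frequent errors, and a yes/no flag are enough to decide whether a skill deserves a proposed amendment and what evidence to attach to it.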

4. Amend: From Observation to Action

When the system has enough evidence that a skill is underperforming, it can propose an amendment to the instructions. This is where the magic happens.

The amendment might:

- Tighten the trigger conditions

- Add a missing edge case

- Reorder steps for clarity

- Change the output format

- Update a tool call signature

Instead of opening a SKILL.md file and guessing what to change, the system proposes a patch grounded in real evidence from how the skill actually behaved in production.

The .amendify() function is the moment where skills stop behaving like static prompt files and start behaving like evolving components.
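To show what an evidence-backed amendment might carry, here is a hedged sketch of a proposal record. It does not reproduce the signature or behavior of `.amendify()`; the `Amendment` class and its fields are hypothetical, and in practice the new text would be drafted by a model from the inspected history rather than written by hand. Only the diff rendering relies on a real API, Python's standard `difflib.unified_diff`.

```python
import difflib
from dataclasses import dataclass


@dataclass
class Amendment:
    """Hypothetical record of one proposed change to a skill's instructions."""
    skill: str
    rationale: str   # why the change is proposed
    evidence: list   # e.g. ids of the failed runs that motivated it
    old_text: str
    new_text: str

    def diff(self) -> str:
        """Render the proposed change as a unified diff for review."""
        return "".join(difflib.unified_diff(
            self.old_text.splitlines(keepends=True),
            self.new_text.splitlines(keepends=True),
            fromfile=f"{self.skill}/SKILL.md (current)",
            tofile=f"{self.skill}/SKILL.md (proposed)",
        ))
```

Keeping the rationale and the evidence next to the patch means a reviewer sees not only what would change but which runs justify it, and `diff()` returns an empty string when the proposal changes nothing.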

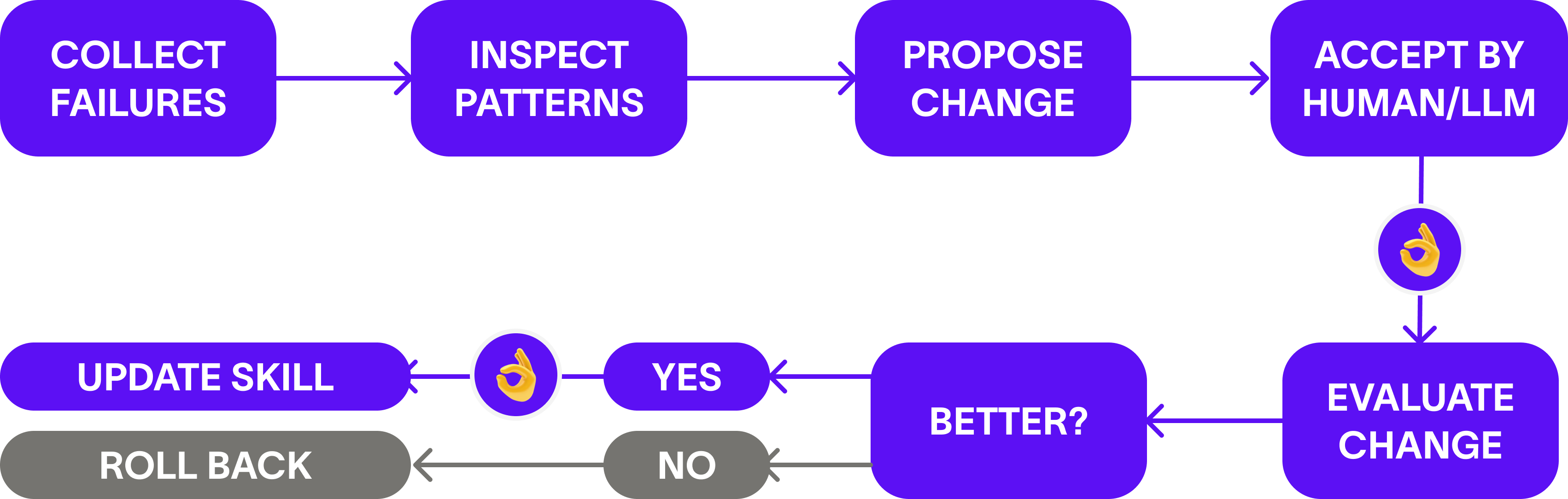

5. Evaluate & Update: Trust but Verify

A self-improving system should never be trusted simply because it can modify itself. That's why every amendment must be evaluated:

- Did the new version actually improve outcomes?

- Did it reduce failures?

- Did it introduce errors elsewhere?

If an amendment doesn't produce measurable improvement, the system rolls it back. Every change is tracked with its rationale and results. The original instructions are never lost.
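The evaluate-then-promote-or-roll-back rule can be sketched in a few lines. Everything here is an assumption for illustration: the `VersionedSkill` and `SkillVersion` names, the use of a single success rate as the metric, and the `min_gain` threshold. In particular, this sketch does not check whether an amendment introduced errors elsewhere, which a fuller evaluation would need to do.

```python
from dataclasses import dataclass


@dataclass
class SkillVersion:
    text: str
    rationale: str
    success_rate: float


def success_rate(outcomes) -> float:
    """Fraction of successful runs; 0.0 when there are no runs."""
    return sum(outcomes) / len(outcomes) if outcomes else 0.0


class VersionedSkill:
    """Keeps every version of a skill and tracks which one is active."""

    def __init__(self, name, text, baseline_outcomes):
        self.name = name
        # The original instructions stay at index 0 and are never removed.
        self.history = [SkillVersion(text, "original",
                                     success_rate(baseline_outcomes))]
        self.active = 0

    @property
    def current(self) -> SkillVersion:
        return self.history[self.active]

    def evaluate(self, new_text, rationale, outcomes, min_gain=0.05) -> bool:
        """Record a candidate and promote it only if it measurably improves."""
        candidate = SkillVersion(new_text, rationale, success_rate(outcomes))
        self.history.append(candidate)  # every attempt is kept for auditing
        improved = candidate.success_rate >= self.current.success_rate + min_gain
        if improved:
            self.active = len(self.history) - 1
        # Otherwise the active index is unchanged, i.e. the amendment is rolled back.
        return improved
```

Rejected candidates stay in `history` alongside their rationale and measured result, so a rollback loses nothing and leaves an audit trail of what was tried and why it was not adopted.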

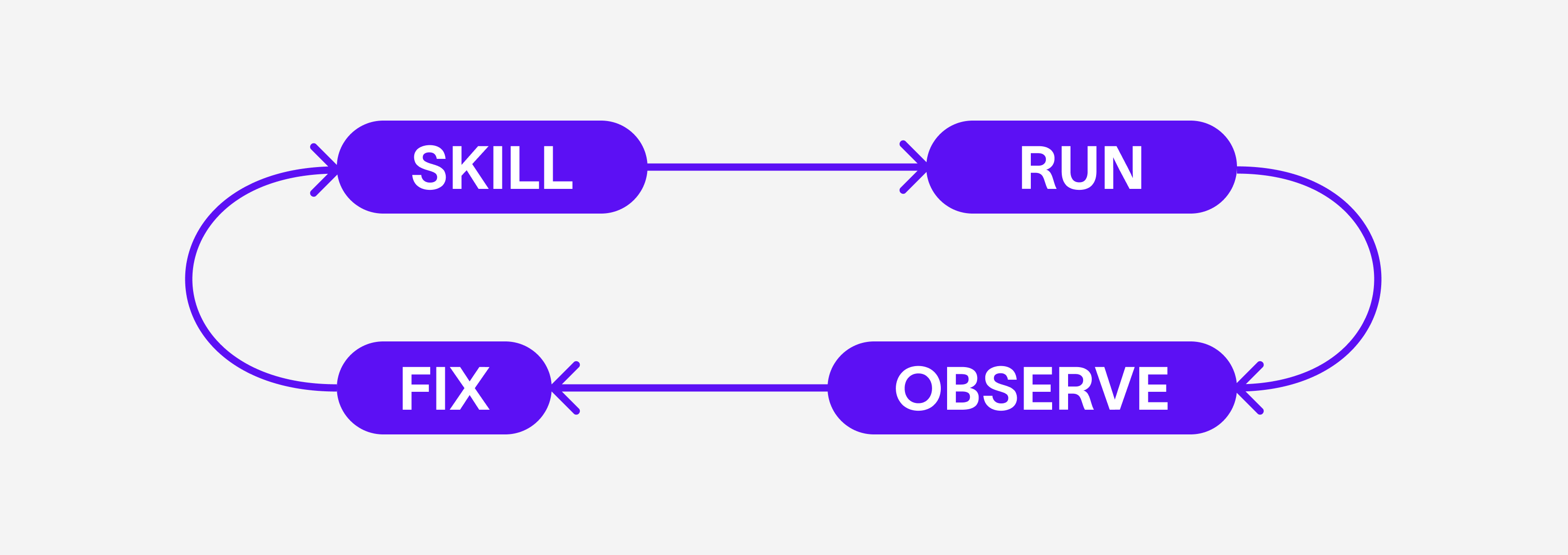

The full loop becomes: ingest, observe, inspect, amend, evaluate, and update.

When evaluation confirms improvement, the amendment becomes the next version of the skill. Self-improvement becomes a structured, auditable process, not uncontrolled modification.

Why This Matters

Skills cannot stay static while the systems around them constantly change. As models, codebases, and tasks evolve, fixed prompt files inevitably degrade.

What we've built is a straightforward way to keep skills evolving automatically, without giving up control or oversight. The system handles the grunt work of observation and analysis, while you stay in control of what changes and when.

The result? Skills that actually get better with use. Skills that learn from failure. Skills that evolve alongside the systems they're part of.

Try It Yourself

- Check out the repo: cognee-skills

- Learn more about Cognee: Cognee

- Join the Discord community: Discord
